Generative video models lack internal 3D representations. When dynamic objects violate multi-view geometric constraints or static backgrounds exhibit structural inconsistencies, Structure-from-Motion (SfM) and 3D Gaussian Splatting (3DGS) pipelines break down, producing ghosting, floaters, and inconsistent geometry.

First, to address local dynamic object removal, we compare three approaches: baseline reconstruction, 2D heuristic correction using YOLOv8 segmentation and ProPainter inpainting, and interaction-aware causal scene rewriting with VOID. The experiments show that 2D inpainting methods hallucinate plausible textures but cannot account for physical interactions like shadows. Causal rewriting through VOID achieves a 57% improvement in perceptual quality (LPIPS: 0.169 → 0.073) by synthesising physically consistent counterfactual backgrounds that model downstream scene physics.

Second, to tackle global scene stabilization, we introduce a pipeline combining Contrast Limited Adaptive Histogram Equalization (CLAHE) and Bilateral Filtering. This approach resolves severe SfM geometric collapse on AI-generated static backgrounds, increasing 3D point extraction by nearly 290% and substantially improving final rendering fidelity (PSNR: 25.09 dB → 34.70 dB).

All post-hoc corrections still introduce artifacts, which suggests a mismatch between unconstrained 2D generation and the geometric consistency needed for 3D reconstruction.

Diffusion-based video synthesis models learn appearance statistics from 2D training data without developing 3D physical understanding. This limitation manifests in two critical ways: objects that move across frames violate multi-view geometric constraints, and seemingly static backgrounds exhibit structural inconsistencies, such as flickering textures and shifting specular reflections, that prevent robust 3D reconstruction.

Standard reconstruction workflows combine Structure-from-Motion (SfM) systems like COLMAP (Schonberger and Frahm 2016) with 3D Gaussian Splatting (3DGS) (Kerbl et al. 2023) to build sparse point clouds from feature correspondences and initialise anisotropic 3D Gaussian representations for novel view synthesis. These pipelines assume static and geometrically coherent scenes. However, AI-generated videos violate this assumption on multiple fronts. Locally, dynamic objects disrupt feature matching, causing dropped frames, inaccurate pose estimates, and severe reconstruction artifacts: ghosting, duplicated structures, and floating geometry where the optimisation attempts to reconcile irreconcilable viewpoints. Globally, the low contrast, unstable lighting dynamics, and temporal micro-hallucinations inherent to diffusion outputs cause SfM to fail in extracting sufficient stable features, leading to geometric collapse and the omission of entire macro-structures during 3DGS initialization.

We evaluate a suite of complementary strategies to mitigate these failures, targeting both local dynamic object removal and global scene stabilization. For local dynamic object removal, we compare three approaches: raw baseline reconstruction, 2D heuristic correction using YOLOv8 and ProPainter (Zhou et al. 2023), and causal scene rewriting with VOID (Motamed et al. 2026), which leverages vision-language models to reason about counterfactual scene physics and synthesise backgrounds accounting for downstream physical interactions. For global geometric stabilisation, we introduce a preprocessing pipeline combining Contrast Limited Adaptive Histogram Equalization (CLAHE) (Zuiderveld 1994) and Bilateral Filtering (Barron and Poole 2016) to robustly enhance structural features while suppressing generative noise. Quantitative metrics (PSNR, SSIM, LPIPS) and qualitative analysis assess which approach best bridges the gap between unconstrained 2D generation and the spatial coherence required for robust 3DGS reconstruction.

We conducted a series of controlled experiments to evaluate preprocessing strategies across two distinct domains: local dynamic object removal and global scene stabilisation. For dynamic object removal, three scenarios evaluated videos with varying physical interaction levels: a jumping dirtbike with minimal shadows, a moving car with prominent shadows and reflections, and the same car processed using interaction-aware causal scene rewriting.

The dirtbike scenario compared three pipelines: (1) raw baseline, (2) YOLOv8 masking during COLMAP feature extraction only, and (3) YOLOv8 masking with ProPainter inpainting for 3DGS training. The mask-only approach prevented the bike from appearing in the final scene but introduced severe artifacts.

The car scenario tested whether 2D heuristic correction could eliminate secondary physical effects. We compared the raw baseline against YOLOv8 masking with ProPainter inpainting, and SAM segmentation with 5% mask dilation before ProPainter inpainting.

For causal scene rewriting, we applied VOID (Motamed et al. 2026), which shifts from traditional video inpainting to interaction-aware counterfactual synthesis. While conventional inpainting models infill missing background pixels and correct photometric artifacts, they cannot model the physical consequences of object removal (halted collisions, free-fall dynamics, or structural dependencies). VOID addresses this by leveraging a Vision-Language Model (VLM) to generate a "quadmask" categorising the scene into four regions: the object to be removed, downstream areas affected by its removal, their overlapping regions, and the preserved static background.

VOID’s generation process can operate in two passes: a diffusion transformer backbone that synthesises counterfactual trajectories modelling how scene physics should evolve in the object’s absence, and an optional flow-warped noise stabilisation pass that preserves rigidity and temporal coherence. Trained on paired counterfactual datasets from Kubric and HUMOTO, VOID learns physical priors for rigid-body dynamics and human-object interactions. In our experiments, we utilised only the first pass (diffusion transformer backbone) without the optional second-pass stabilisation.

For global scene stabilisation, a fourth experiment evaluated an AI-generated industrial loft exhibiting static background instabilities, such as shifting reflections and fluctuating textures. We implemented a dual-stage 2D preprocessing pipeline designed to separate geometric anchoring from photometric optimisation. First, Contrast Limited Adaptive Histogram Equalization (CLAHE) was applied to the original frames. The CLAHE clipping limit was tuned to enrich structural details and local contrasts without amplifying generative noise into unmatchable artifacts. These enhanced frames were fed exclusively into COLMAP to extract a robust and dense 3D point cloud. Second, to initialise the 3DGS optimisation, the original frames were processed using a Bilateral Filter (d = 7, σcolor = 75.0, σspace = 75.0). This step aimed to homogenise noisy surface areas and alleviate view-dependent lighting ambiguities while strictly preserving sharp object boundaries. The final 3DGS model was therefore trained by projecting the smoothed bilateral frames onto the dense geometric scaffold extracted via CLAHE. It should be noted that both CLAHE and Bilateral Filtering operate purely as frame-by-frame 2D spatial heuristics and do not natively enforce temporal consistency across the video sequence.

Reconstructions were evaluated using quantitative metrics (PSNR, SSIM, LPIPS) alongside qualitative visual inspection. Table 1 summarises the findings for local dynamic object removal, while Table 2 details the global scene stabilisation experiment.

| Method | LPIPS ↓ | Std Dev |

|---|---|---|

| Dirtbike (Minimal Interaction) | ||

| Baseline | 0.0861 | 0.0164 |

| Masked (COLMAP only) | 0.3545 | 0.0743 |

| Masked + Inpainted | 0.3157 | 0.0511 |

| Car (Significant Interaction) | ||

| Baseline | 0.1690 | 0.0260 |

| Masked + Inpainted (5%) | 0.1646 | 0.0170 |

| Car (Causal Rewriting) | ||

| Baseline | 0.1690 | 0.0260 |

| VOID | 0.0726 | 0.0068 |

Dirtbike. Masking during COLMAP prevented the dirtbike from appearing in the final reconstruction but produced the worst perceptual quality, as shown in Figure 1. The missing object created severe holes that 3DGS struggled to reconcile. Adding ProPainter inpainting improved results by filling masked regions (Figure 2), but hallucinated textures introduced multi-view inconsistencies. The raw baseline performed best quantitatively despite containing ghosting from the moving dirtbike.

Car. The baseline baked moving shadows into the 3DGS geometry. Both YOLOv8 and SAM-based 2D correction pipelines produced marginal improvement, as shown in Figure 3. Dilation reduced some artifacts, but neither approach eliminated the car’s physical interactions, leaving residual shadow motion that corrupted reconstruction. Standard 2D inpainting lacks the causal reasoning required for complex physical effects.

Car VOID. Causal scene rewriting produced the strongest quantitative improvement: a 57% reduction in LPIPS. Figure 4 shows the physically consistent background synthesis. Qualitative inspection, though, revealed that VOID introduced its own temporal and structural artifacts. Because we used only the first-pass diffusion transformer without the optional flow-warped noise stabilisation, these artifacts likely stem from the structural deformation and morphing issues that the second pass is designed to address. The underlying video diffusion models generate frames without explicit 3D constraints, ultimately suffering from geometric inconsistency similar to raw generative videos.

| Metric | Baseline | CLAHE+Bilateral |

|---|---|---|

| Extracted 3D Points ↑ | 2,342 | 9,158 |

| Reprojection Error ↓ | 0.9612 | 0.9635 |

| PSNR ↑ | 25.09 dB | 34.70 dB |

| SSIM ↑ | 0.9169 | 0.9531 |

| LPIPS ↓ | 0.2229 | 0.2213 |

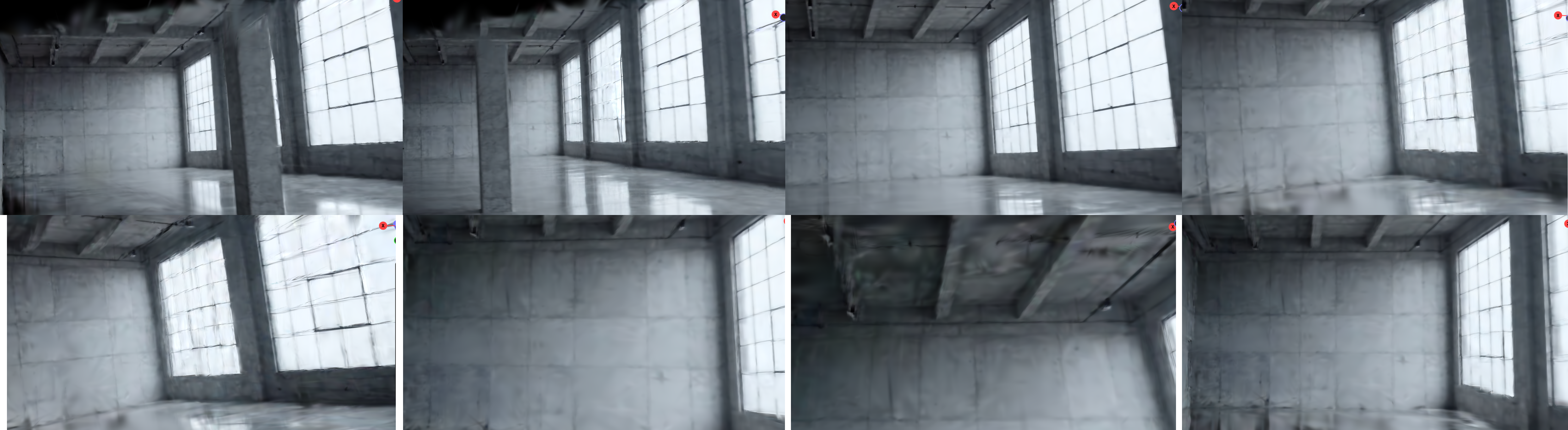

Industrial Loft (Global Stabilisation). Table 2 demonstrates that the raw baseline suffered a severe geometric collapse due to the generative noise, extracting only 2,342 points. The dual-stage preprocessing pipeline successfully stabilised the scene, increasing the extracted 3D spatial anchors by nearly 290% (9,158 points) while maintaining sub-pixel reprojection accuracy. This robust geometric foundation translated to a massive +9.61 dB improvement in PSNR (from 25.09 dB to 34.70 dB) and higher structural similarity (SSIM). The LPIPS scores remained comparable; this is expected, as the neural metric penalises the absence of high-frequency generative noise that was intentionally smoothed by the Bilateral Filter.

Qualitative inspection visually confirms these metrics (Figure 5). In the baseline model, the lack of spatial anchors caused prominent macro-structures, such as a structural column, to completely disappear, rendering as amorphous fog due to ghosting artifacts. The preprocessed model successfully reconstructed these solid topologies. However, view-dependent ambiguities, particularly specular reflections on the floor, disrupted the photometric optimisation in both models, highlighting a shared limitation.

Finally, off-path navigation was evaluated using the SuperSplat viewer (Figure 6). Only the improved model is visualised; the baseline model generated extreme spatial outliers (floaters) during initialisation, causing a bounding box explosion that rendered the scene disproportionately scaled and unnavigable in standard 3D viewers.

Dynamic objects violate the geometric constraints essential for SfM-based reconstruction, producing ghosting and floaters. 2D heuristic corrections using YOLOv8 and ProPainter removed primary objects but failed to address physical interactions like shadows, corrupting 3D geometry. Masking during COLMAP without inpainting produced the worst results, creating irreconcilable geometric holes.

Causal rewriting via VOID achieved substantial quantitative gains (a 57% LPIPS improvement) by simulating physically plausible counterfactual backgrounds. Even with only the first-pass diffusion transformer (omitting the optional flow-warped noise stabilisation), VOID outperformed 2D heuristic approaches. The observed temporal artifacts likely reflect the structural deformation inherent to lightweight video diffusion models and could potentially be mitigated by the second-pass stabilisation. Still, VOID’s reliance on unconstrained video diffusion means it generates frames without explicit 3D geometric representations.

Parallel to the challenges posed by dynamic objects, our evaluation of AI-generated static backgrounds revealed that generative noise and structural inconsistencies cause severe geometric collapse during SfM. Our dual-stage preprocessing pipeline demonstrated that decoupling geometric anchoring (via CLAHE) from photometric optimisation (via Bilateral Filtering) can prevent the extreme spatial outliers and bounding box explosions observed in the baseline. However, while this approach increased extracted 3D spatial anchors by nearly 290% and quantitatively improved PSNR by 9.61 dB, the qualitative visual results remain fundamentally compromised. Operating purely as 2D spatial heuristics, these filters cannot synthesize missing 3D information or resolve inherent view-dependent ambiguities, such as inconsistent specular reflections caused by unconstrained generative lighting. Consequently, the final reconstructed views, despite avoiding complete SfM failure, still exhibit pronounced blurring, structural artifacts, and a lack of true spatial coherence.

Ultimately, post-hoc corrections (whether through masking, 2D inpainting, single-pass diffusion rewriting, or 2D spatial filtering) remain insufficient for universally robust 3DGS reconstruction. Multi-view consistency requires methods that natively integrate 3D constraints during generation. Frameworks with explicit 3D memory could enforce geometric and temporal coherence throughout the generative process rather than attempting repairs after violations occur.